I just deleted 150 lines of code by adding one optional parameter. Here’s the pattern.

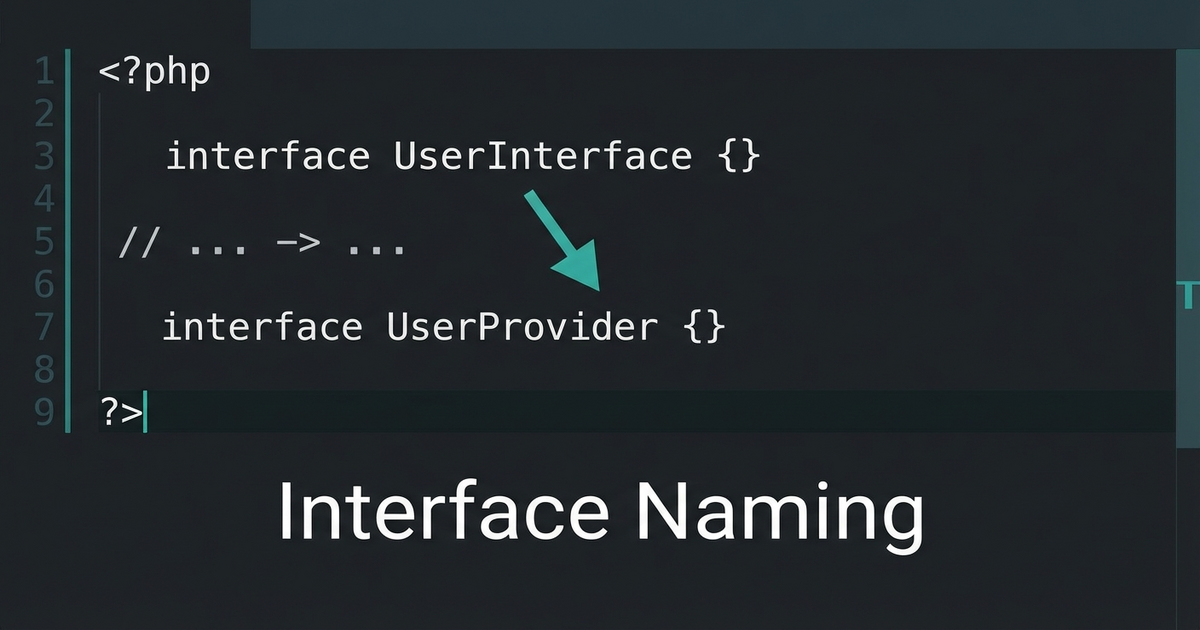

The Duplicate Method Problem

You have a method that works great. Then a new requirement comes in that’s almost the same, but with a slight twist. So you copy the method, tweak it, and now you have two methods that are 90% identical.

```php
public function getLabel(): string
{
    return $this->name . ' (' . $this->code . ')';
}

public function getLabelForExport(): string
{
    return $this->name . ' - ' . $this->code;
}

public function getLabelWithPrefix(): string
{
    return strtoupper($this->code) . ': ' . $this->name;
}
```

Three methods. Three variations of essentially the same thing. And every time the underlying logic changes, you update all three (or forget one).

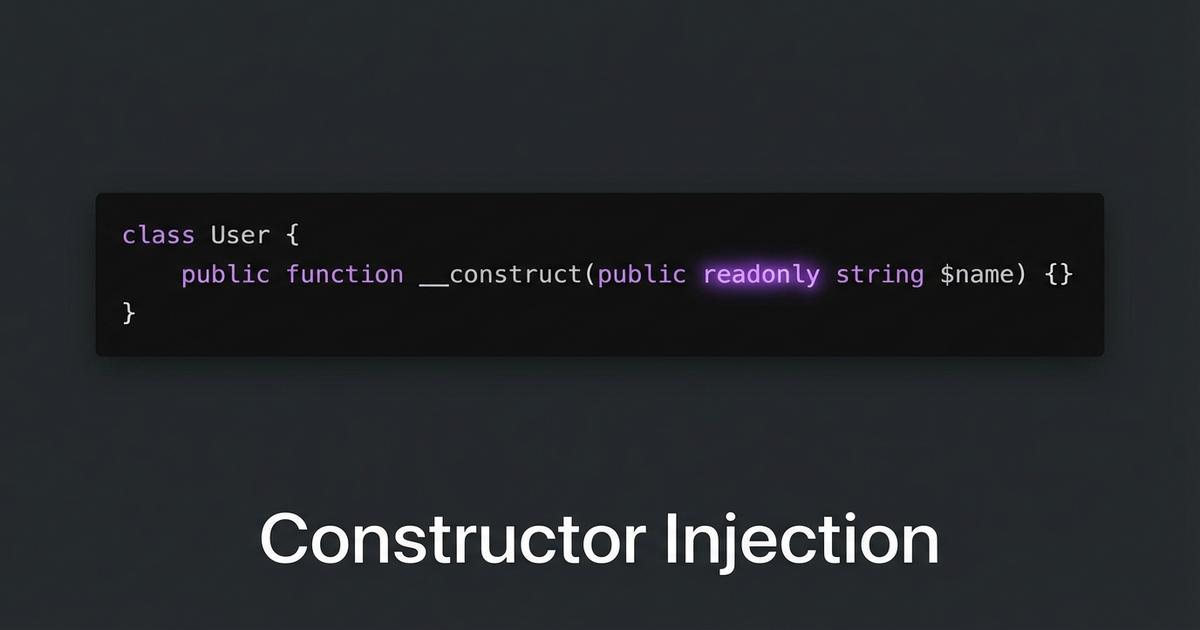

Add a Parameter Instead

```php
public function getLabel(string $format = 'default'): string
{
    return match ($format) {
        'export' => $this->name . ' - ' . $this->code,
        'prefix' => strtoupper($this->code) . ': ' . $this->name,
        default => $this->name . ' (' . $this->code . ')',
    };
}
```

One method. One place to update. All existing calls that use getLabel() with no arguments keep working because the parameter has a default value.
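To see the merged method from the caller's side, here is a minimal runnable sketch. The `Product` class name, its constructor, and the sample values are assumptions made for illustration; only the `getLabel()` body comes from the snippet above.

```php
<?php

declare(strict_types=1);

// Hypothetical host class: the article does not say which class owns getLabel().
final class Product
{
    public function __construct(
        private string $name,
        private string $code,
    ) {
    }

    public function getLabel(string $format = 'default'): string
    {
        return match ($format) {
            'export' => $this->name . ' - ' . $this->code,
            'prefix' => strtoupper($this->code) . ': ' . $this->name,
            default => $this->name . ' (' . $this->code . ')',
        };
    }
}

$product = new Product('Widget', 'wd-1');

echo $product->getLabel(), PHP_EOL;          // Widget (wd-1)
echo $product->getLabel('export'), PHP_EOL;  // Widget - wd-1
echo $product->getLabel('prefix'), PHP_EOL;  // WD-1: Widget
```

The no-argument call is untouched, while call sites that used getLabelForExport() or getLabelWithPrefix() would switch to passing 'export' or 'prefix'.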

When to Use This

This works when the methods share the same core logic and only differ in formatting, filtering, or a small behavioral switch. If the “variant” method has completely different logic, keep it separate.
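The same idea applies when the difference is a filter rather than a format. The sketch below is hypothetical: `Catalog`, `getItemNames()`, and the `active` flag are invented for illustration, standing in for a pair of near-duplicate methods such as one that returns all names and one that returns only the active ones.

```php
<?php

declare(strict_types=1);

// Hypothetical example; none of these names come from the article.
final class Catalog
{
    /** @param list<array{name: string, active: bool}> $items */
    public function __construct(private array $items)
    {
    }

    /**
     * One method with an optional switch instead of two near-identical methods.
     *
     * @return list<string>
     */
    public function getItemNames(bool $activeOnly = false): array
    {
        $items = $activeOnly
            ? array_filter($this->items, fn (array $item): bool => $item['active'])
            : $this->items;

        // array_filter() preserves keys, so reindex before returning.
        return array_values(array_map(fn (array $item): string => $item['name'], $items));
    }
}

$catalog = new Catalog([
    ['name' => 'Widget', 'active' => true],
    ['name' => 'Gadget', 'active' => false],
]);

print_r($catalog->getItemNames());                  // both names
print_r($catalog->getItemNames(activeOnly: true));  // only "Widget"
```

Passing the flag as a named argument (available since PHP 8.0) keeps a bare `true` from being cryptic at the call site.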

The signal to look for: two methods with nearly identical bodies where you keep having to update both. That’s your cue to merge them with an optional parameter.

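Bonus: PHP 8's match expression keeps the branching compact, with no if/else chain needed. It compares with strict equality (===), and in the version above any unrecognized format falls through to the default arm.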
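One possible variation, if you would rather have a mistyped format fail loudly than quietly produce the default label: give 'default' its own arm and throw from the fallback. This is a sketch that changes the behavior of the article's version, not a drop-in equivalent; `formatLabel()` is a hypothetical free function used so the example runs on its own.

```php
<?php

declare(strict_types=1);

// Hypothetical standalone function; the article's version is a method on a class.
function formatLabel(string $name, string $code, string $format = 'default'): string
{
    return match ($format) {
        'default' => $name . ' (' . $code . ')',
        'export' => $name . ' - ' . $code,
        'prefix' => strtoupper($code) . ': ' . $name,
        // throw is an expression in PHP 8, so it can serve as a match arm.
        default => throw new InvalidArgumentException("Unknown label format: {$format}"),
    };
}

echo formatLabel('Widget', 'wd-1', 'export'), PHP_EOL; // Widget - wd-1

try {
    formatLabel('Widget', 'wd-1', 'exprot'); // typo in the format name
} catch (InvalidArgumentException $e) {
    echo $e->getMessage(), PHP_EOL; // Unknown label format: exprot
}
```

Whether the silent fallback or the exception is the better choice depends on how much you trust the call sites.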